In the United States, an estimated 85% of the population has at least one medical encounter each year, and 25% of those people have as many as 8 to 9 encounters annually. A single visit involves a multidisciplinary team of healthcare professionals, administrative staff, patients, and their families or friends. Such an encounter often leads to further visits to other clinicians or services across multiple organizations that use different medical records. Fragmented care has been identified as a common contributor to medical errors.

Studies have also indicated that more than half of adverse events in healthcare are attributable to surgical care, and that most of these are deemed preventable. Furthermore, root cause analyses have linked patient outcomes to non-technical aspects of performance, such as teamwork and safety culture, rather than to a mere lack of technical expertise. The effective delivery of surgical care requires the orchestration of a multidisciplinary team working in harmony in a time-sensitive and complex environment. Any breakdown in these interdependent processes can lead to performance failures and adverse events. In an interview study of surgeons, communication was identified as a causal factor in 43% of errors made in surgery.

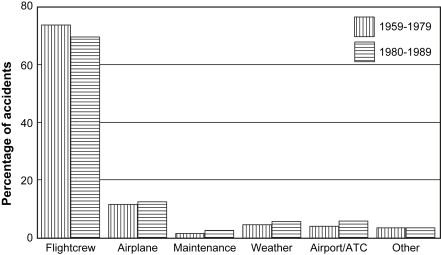

The aviation industry has demonstrated the importance of effective teamwork to flight safety and has verified the effectiveness of specific team training in improving safety. As aircraft systems became more complex in the 1970s, the industry noted that more accidents were occurring (Fig. 1) and conducted a formal investigation to identify their root causes so that the number of accidents could be reduced.

Source: Helmreich, R. L., & Foushee, H. C. (2010). Chapter 1: Why CRM? Empirical and theoretical bases of human factors training. In B. Kanki (Ed.), Crew Resource Management (2nd ed., p. 7). New York, NY: Elsevier.

The record of these investigations provides alarming documentation of the ways in which crew coordination failed at critical moments.

The following are human errors caused by interpersonal miscommunication (Helmreich & Foushee, 2010):

As a result of this investigation, the focus shifted from individual issues to the crew-level issues that compromised safety, a significant advance in understanding what determines safety in flight operations. Just as performance can suffer because of poor technology or inadequate training, system effectiveness can also be reduced by errors in the design and management of crew-level tasks and of organizations.

Beginning in 1980, NASA endorsed Cockpit Resource Management (CRM), later renamed Crew Resource Management. CRM focuses on interpersonal communication, leadership, and decision making, and it is used in environments where human error can have devastating effects. It is regarded as a countermeasure with three main goals:

Adapted from: https://flightsafety.org/files/bass/2015/proceedings/Walala.pdf

Authored by Cindy Ebner, MSN, RN, CPHRM, FASHRM
