
A distribution of sample means is a distribution that shows the means from all possible samples of a given size.
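To make the definition concrete, here is a minimal Python sketch that lists every possible ordered sample of size n from a spinner and tallies the resulting sample means. It assumes a hypothetical spinner with four equally likely sections labeled 1 through 4 (the pictured spinner may be weighted differently), and the helper name `sampling_distribution` is illustrative only.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Hypothetical spinner: four equally likely sections labeled 1-4.
# (Assumption: the spinner in the tutorial's image may have unequal sections.)
SPINNER = [1, 2, 3, 4]

def sampling_distribution(values, n):
    """Return {sample mean: probability} over every ordered sample of size n."""
    counts = Counter()
    for sample in product(values, repeat=n):
        # Exact fractions avoid floating-point rounding in the means.
        counts[Fraction(sum(sample), n)] += 1
    total = len(values) ** n
    return {mean: Fraction(c, total) for mean, c in sorted(counts.items())}

dist = sampling_distribution(SPINNER, 2)
for mean, prob in dist.items():
    print(f"mean {float(mean):.2f}: probability {prob}")
```

Enumerating all samples is only practical for small n, since there are 4^n of them; the point is that the resulting table of means and probabilities *is* the distribution of sample means for that sample size.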

EXAMPLE

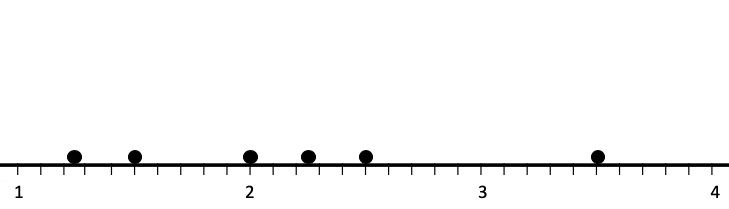

Consider the spinner shown here. The table lists several samples of four spins each, along with the mean of each sample:

| Sample (4 spins) | Mean |
|---|---|
| {2, 4, 3, 1} | 2.50 |
| {1, 4, 3, 1} | 2.25 |
| {4, 2, 4, 4} | 3.50 |
| {2, 2, 3, 1} | 2.00 |
| {3, 1, 1, 1} | 1.50 |
| {1, 1, 1, 2} | 1.25 |
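Each mean in the table is the sum of the four spins divided by 4; for example, (2 + 4 + 3 + 1) / 4 = 10 / 4 = 2.50. A short Python sketch that recomputes all six means from the listed samples:

```python
# The six samples of four spins listed in the table.
samples = [
    [2, 4, 3, 1],
    [1, 4, 3, 1],
    [4, 2, 4, 4],
    [2, 2, 3, 1],
    [3, 1, 1, 1],
    [1, 1, 1, 2],
]

# Sample mean = sum of the spins / number of spins.
sample_means = [sum(s) / len(s) for s in samples]
for s, m in zip(samples, sample_means):
    print(s, f"{m:.2f}")
```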

EXAMPLE

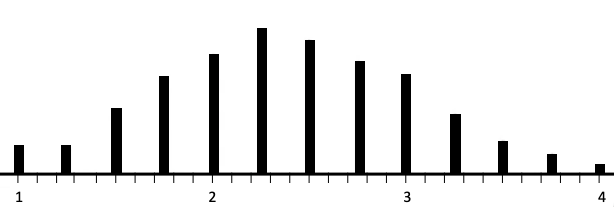

For the spinner above, these histograms show the distribution of sample means for sample sizes of 1 spin, 4 spins, 9 spins, and 20 spins.
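One way to see where the center of each histogram sits is to compute the sampling distribution exactly instead of enumerating samples. The sketch below assumes a hypothetical spinner with four equally likely sections labeled 1 to 4, since the true section sizes come from the image. It builds the distribution of the total of n spins by repeated convolution and divides by n to get the mean of the sample means; the helper names are illustrative.

```python
from fractions import Fraction

# Hypothetical spinner: value -> probability (assumed equal sections here).
SPINNER = {1: Fraction(1, 4), 2: Fraction(1, 4), 3: Fraction(1, 4), 4: Fraction(1, 4)}

def sum_distribution(spinner, n):
    """Exact distribution of the total of n independent spins (repeated convolution)."""
    dist = {0: Fraction(1)}
    for _ in range(n):
        nxt = {}
        for total, p in dist.items():
            for value, q in spinner.items():
                nxt[total + value] = nxt.get(total + value, 0) + p * q
        dist = nxt
    return dist

def mean_of_sample_means(spinner, n):
    """Center of the sampling distribution: expected sample total divided by n."""
    return sum(total * p for total, p in sum_distribution(spinner, n).items()) / n

population_mean = sum(v * p for v, p in SPINNER.items())
for n in (1, 4, 9, 20):
    print(n, mean_of_sample_means(SPINNER, n), population_mean)
```

Under this assumption the mean of the sample means equals the spinner's own mean, 5/2, for every sample size. A differently weighted spinner would only change the probabilities in `SPINNER`.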


EXAMPLE

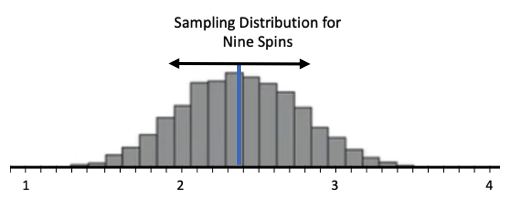

How about the spread? The arrows on each histogram indicate the standard deviation of that distribution.
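The spread can be checked the same way. A standard result is that the standard deviation of the sample means equals σ/√n, where σ is the standard deviation of a single spin. This sketch again assumes a hypothetical spinner with four equally likely sections labeled 1 to 4; it computes the exact variance of the sample means and compares its square root with σ/√n. The helper names are illustrative.

```python
import math
from fractions import Fraction

# Hypothetical spinner: value -> probability (assumed equal sections; the
# pictured spinner may differ).
SPINNER = {1: Fraction(1, 4), 2: Fraction(1, 4), 3: Fraction(1, 4), 4: Fraction(1, 4)}

def sum_distribution(spinner, n):
    """Exact distribution of the total of n independent spins (repeated convolution)."""
    dist = {0: Fraction(1)}
    for _ in range(n):
        nxt = {}
        for total, p in dist.items():
            for value, q in spinner.items():
                nxt[total + value] = nxt.get(total + value, 0) + p * q
        dist = nxt
    return dist

def variance_of_sample_means(spinner, n):
    """Exact variance of the sample mean for samples of n spins."""
    dist = sum_distribution(spinner, n)
    center = sum(Fraction(total, n) * p for total, p in dist.items())
    return sum(p * (Fraction(total, n) - center) ** 2 for total, p in dist.items())

mu = sum(v * p for v, p in SPINNER.items())
sigma_sq = sum(p * (v - mu) ** 2 for v, p in SPINNER.items())
for n in (1, 4, 9, 20):
    sd = math.sqrt(variance_of_sample_means(SPINNER, n))
    print(f"n={n:2d}  SD of sample means={sd:.4f}  sigma/sqrt(n)={math.sqrt(sigma_sq / n):.4f}")
```

Because the spread shrinks like 1/√n, quadrupling the sample size from 1 spin to 4 spins halves the standard deviation of the sample means.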


EXAMPLE

Lastly, having looked at center and spread, let's describe the shape of these distributions. You'll notice that the shape becomes more and more like a normal distribution as the sample size increases. This behavior is described by a theorem called the central limit theorem.

Source: THIS TUTORIAL WAS AUTHORED BY JONATHAN OSTERS FOR SOPHIA LEARNING. PLEASE SEE OUR TERMS OF USE.